Actions to take: Rate interview candidates on a question-by-question basis. Train yourself and encourage others to focus on factual information from a candidate's answer rather than anything stylistic about their answers. Develop or encourage your organization to develop rating scale descriptions that give meaningful guidance on how to rate.

Most organizations do not have an effective method for deciding who to hire at the end of the interview process. There are a variety of reasons why, not least of which is the historical context. Only in the last 30 years or so has behavioral economics, as a field of study, started to reveal the human tendency toward bias and our inability to make fully rational decisions. Prior to that, decisions were based on the subjective impression of the hiring manager, and the entire process was built to facilitate their choice. While plenty of organizations have begun to make changes that correct for these errors, we still have a long way to go.

Organizations have made strides in terms of codifying the interview process to ensure that it is consistent across candidates. "Clever" interview questions that were popular in the early 2000s are being replaced with scientifically validated behavioral interview questions. Hiring panels are being trained on implicit bias issues.

One area where we still have a long way to go, however, is our process for making the final decision.

Imagine an instructor grading final exams for a university course. They read the student's entire exam, think about it for a while, maybe chat with others who have read the entire exam, and then decide holistically on a score out of 100. "This one feels like a 92 out of 100, that one feels like an 85 out of 100." That sort of thing.

Would anyone consider this to be a fair assessment of the student's skill? Of course not. It's absurd on its face. Yet this is how a great many hiring managers make their hiring choices. It's worse, actually. Using our exam analogy, it would be more along the lines of the professor reading all the exams before deciding on a score for any of them. We go through all of the interviews. Then, only at the end, do we try to make an assessment of who did best.

The best, fairest, and most effective way to rate interview candidates is the same as the best, fairest, and most effective way to rate a final exam: do it item by item, with a clear rubric and criteria for grading, intentionally leaving out anything that is not explicitly job-related, such as your "feel" of the candidate. Again, think about how angry you would be at a professor who adjusted scores based on their "feel" of the student's knowledge. We should be just as incensed by managers who operate by gut, or instinct, or their impression, or whatever else you want to call it. Yet, somehow, "Wow, I got great vibes from them" is a totally acceptable thing to say on an interview panel.

When I say you need to rate your candidates "item by item," here is exactly what I mean:

- Have each interview panelist score the candidate's response to each question,

- Using a pre-defined rating scale, such as 1-5,

- Immediately after the candidate is finished with the question,

- Without speaking to or otherwise being influenced by the other panelists.

As dry and procedural as it sounds, simply adding up the scores and selecting the highest scoring person is the best method for choosing who to hire. Virtually every other process accommodates or even encourages the decision to be influenced by irrelevant (aka biased) information. The fact is, our rating process should be more about actively pushing away some sources of information, not about gathering as much information as possible. More information is not better information. As we have talked about, this is a hard pill for some bosses to swallow. Nevertheless, it is true.

We stick to the simplest process, rating each question one at a time, to safeguard our decision from as much irrelevant information as possible.
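For readers who track ratings in a spreadsheet or script, the add-up-the-scores step can be sketched in a few lines of Python. This is only an illustration: the panelist and candidate names, the two-question interview, and the function names `total_scores` and `rank_candidates` are all made up for the example, and the 1-5 scale is the one mentioned above.

```python
from collections import defaultdict

# Hypothetical ratings: (panelist, candidate, question number, score).
# Each score is recorded by one panelist, right after one question,
# without consulting the other panelists.
ratings = [
    ("panelist_a", "candidate_1", 1, 4),
    ("panelist_a", "candidate_1", 2, 3),
    ("panelist_b", "candidate_1", 1, 5),
    ("panelist_b", "candidate_1", 2, 3),
    ("panelist_a", "candidate_2", 1, 3),
    ("panelist_a", "candidate_2", 2, 4),
    ("panelist_b", "candidate_2", 1, 2),
    ("panelist_b", "candidate_2", 2, 4),
]

# Assumed pre-defined rating scale of 1 to 5.
SCALE_MIN, SCALE_MAX = 1, 5


def total_scores(ratings):
    """Add up every per-question score for each candidate."""
    totals = defaultdict(int)
    for panelist, candidate, question, score in ratings:
        if not SCALE_MIN <= score <= SCALE_MAX:
            raise ValueError(
                f"{panelist} gave question {question} a score of {score}, "
                f"which is outside the {SCALE_MIN}-{SCALE_MAX} scale"
            )
        totals[candidate] += score
    return dict(totals)


def rank_candidates(ratings):
    """Return (candidate, total) pairs, highest total first."""
    totals = total_scores(ratings)
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for candidate, total in rank_candidates(ratings):
        print(candidate, total)
```

Note what the sketch leaves out on purpose: there is no field for an overall impression and no adjustment step after the totals are computed. It also does not resolve ties; how to handle those is a decision for your organization.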

(To everyone saying "But what about the human touch?" By all means, ask all the questions you like about teamwork or conflict resolution or any other interpersonal skill. Then rate the answers, not your brief impression of the person.)

Some of you are saying, "My organization has a scale, so I guess we're fine." You are probably not fine. It is not enough to merely have a scale. You need to train your interview panelists and hiring managers on exactly how to rate.

If you just tell people, “Rate the candidate’s answer on a scale of 1 to 5,” you will get all sorts of variation in how people use the scale. "5" for one person might be "3" for another. Many people will never rate anyone a 1 because there is a vague unease with giving a person the lowest score. I've seen this happen even when the candidate didn't have an answer at all—the rater gave them a 2 because the candidate "tried."

There is a second, bigger problem that occurs when you don't train on rating. In our post on why most interview questions fail, we talked about how some questions end up soliciting good-sounding-but-irrelevant information. We explained that these questions fail because they reward people who speak well in interviews rather than those who do well on the job. Untrained raters will fall into this trap even with well-designed interview questions. They will give high ratings to people who speak eloquently, even if those people ultimately say nothing of substance in their answer.

You need to train yourself and others to look for materially important details about how the candidate did the work. Watch the answer for factual information. What exactly was the candidate responsible for? How exactly did they proceed in their example? What factors did they consider in their decision-making process? Is the way they acted similar to how you would want them to act in your organization? That is what matters, not how well they strung together their words. When I train on this, I will show recordings of answers to interview questions: one where the speaker is very on-the-ball and well spoken, but ultimately gives little or no information about their work; one where the speaker muddles through the answer but has real content about how they performed some aspect of a job. Before being trained, people invariably rate the first video higher than the second.

Your organization should create meaningful descriptions of each score. Most rating systems give about this level of detail: "Rate 1 to 5, where 1 is a poor answer and 5 is an excellent answer." This doesn't guide interviewers in any meaningful way. People have different notions about "poor" and "excellent." Give clear criteria about what constitutes a 1, what constitutes a 2, and so on, to prevent this inconsistency.

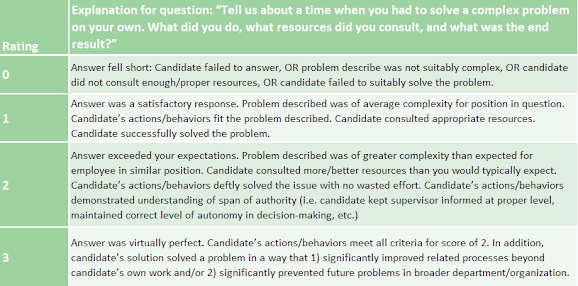

Truly going the extra mile, an organization would be best served to create these descriptions for each type of question you will be asking during the interview. For instance, here is a rating description for questions that probe a candidate's problem solving.

Take a look at the level of detail in this rating description. It is clear when the candidate must be given the lowest score. Even your kindest-hearted interviewers will be compelled to give that score when appropriate with this level of guidance on how to rate. Same thing on the other end. There are specific criteria that must be met before the highest rating can be given. Will there still be variation among the raters? Some, yes. But if that's true for this rating system, imagine how useless a basic "rate 1 to 5" scale is.

A set of rating descriptions like this will make your interviewers far better at their jobs. They will be listening for whether or not the candidate's answer meets these criteria, which means they will focus on the content of the candidate's answers: the factual information that meets or fails to meet the criteria. Because they will be focused on relevant information, there will be less room for biased or irrelevant information to influence hiring decisions. In short, your organization will hire better.

In our article about managers' overconfidence in hiring instincts, the capstone advice was to "develop and adhere to hiring processes that are specifically crafted to minimize bias and fallibility in human decision-making." Hopefully this post will be a start on your journey to more effective rating practices.
